A new version of Poll-n-Ping! has been rolled out, with new features to help you spend more time on Poll-n-Ping! and a major bug fix in user registration.

Ping

Ping Bloglines – Poll-n-Ping! exclusive

Bloglines is the latest service to be added to the list of services Poll-n-Ping! can ping. None of the other multiple-ping services out there can ping Bloglines. This brings the total number of services Poll-n-Ping! supports to 20.

Some multiple blog ping services (e.g. Pingoat) have ping servers that no longer exist. We at Poll-n-Ping! continuously monitor the upstream ping services to ensure that they are live. We also believe in being transparent, and hence provide you with the result of each ping we make.
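To illustrate what reporting the result of each ping could look like, here is a minimal sketch in Python 3. It is not Poll-n-Ping!'s actual code. It assumes the upstream service answers in the usual weblogUpdates style, with a struct holding an `flerror` flag and a `message` string; the function name `summarize_ping` is made up for this example.

```python
def summarize_ping(service, response):
    """Turn one weblogUpdates-style ping response into a one-line report."""
    # Assumption: a well-formed response is a dict with an 'flerror' flag and
    # a 'message' string. Anything else is reported as an unknown response.
    if not isinstance(response, dict) or "flerror" not in response:
        return "%s: unknown response %r" % (service, response)
    status = "failed" if response["flerror"] else "ok"
    return "%s: %s (%s)" % (service, status, response.get("message", ""))


if __name__ == "__main__":
    # Hypothetical response, shaped like a typical successful ping.
    reply = {"flerror": False, "message": "Thanks for the ping."}
    print(summarize_ping("example-service", reply))
```

Reporting unexpected responses instead of raising keeps one misbehaving upstream service from hiding the results of the others.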

We are constantly trying to increase the number of services we ping. Do not forget to check your Poll-n-Ping! account regularly for the latest additions. If you do not already have a Poll-n-Ping! account, you are not exploiting the maximum potential of your blog. Grab yourself a free Poll-n-Ping! account without further delay.

Ping more search engines than with Ping-o-Matic

Now you can ping more blog search engines at once than was possible with Ping-o-Matic. Poll-n-Ping! added 3 more services today, bringing the number of services that will be pinged to 18, while Ping-o-Matic only supports 16 services.

Poll-n-Ping! is the latest pinging service. It is more than just a pinging service: it monitors (polls) your blog for changes. It also notifies you should your blog go down for some reason. All this comes for free, the best price ever.

Go grab your Poll-n-Ping! account now, so you can forget about pinging and concentrate on blogging. Poll-n-Ping! will take care of pinging blog search services.

Poll-n-Ping!, automagically ping 15+ services

Poll-n-Ping! added 8 more services today to the list of services that will be automatically pinged, bringing the number to 15. Register for a Poll-n-Ping! account and enter your blog details today, and never bother about manually pinging when there are new posts on your blog; Poll-n-Ping! will take care of it all.

The number of services that Poll-n-Ping! supports will increase further in the near future. Don’t forget to create a Poll-n-Ping! account.

Poll-n-Ping, coz u r busy blogging

I would like to introduce a brand new service. It is an automated blog search directory pinging service named Poll-n-Ping. It is different from Ping-o-matic and similar services because Poll-n-Ping monitors the blog (actually the feed) for changes, and when it detects changes it automatically pings the blog search directories.

You can check out the service at http://www.mohanjith.net/pnp. All this comes free of charge, but donations are always welcome. Right now there is no limit on the number of blogs that can be monitored by a single user. If you want your blog to be submitted to all the blog search directories that we add support for from time to time, you will have to visit Poll-n-Ping regularly.

Soon I plan to add an alert service to Poll-n-Ping: subscribed users will be able to receive notifications by mail or IM when the content changes, the blog goes offline, and/or the blog comes online. However, this will be a paid service unless I receive enough donations to support the hosting.
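The three alert conditions (content changed, blog went offline, blog came online) amount to comparing two consecutive observations of a blog. The Python 3 sketch below shows one way that comparison might look. It is only an illustration, not the planned implementation; `detect_events` and the shape of the observation dicts are assumptions made up for this example.

```python
def detect_events(previous, current):
    """List the alert-worthy events between two observations of a blog.

    Each observation is a dict with two keys (hypothetical layout):
      'online': True if the feed could be fetched on that poll
      'digest': digest of the feed as last fetched, or None if never fetched
    """
    events = []
    if previous["online"] and not current["online"]:
        events.append("went_offline")
    elif not previous["online"] and current["online"]:
        events.append("came_online")
    # Content is only compared when both digests are known.
    if (previous["digest"] is not None and current["digest"] is not None
            and previous["digest"] != current["digest"]):
        events.append("content_changed")
    return events


if __name__ == "__main__":
    before = {"online": False, "digest": "abc"}
    after = {"online": True, "digest": "def"}
    print(detect_events(before, after))
```

Carrying the last known digest through an outage, rather than resetting it, lets a change made while the blog was unreachable still be reported once it comes back.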

Poll-n-Ping has TurboGears under the hood :-).

Hope you will find the Poll-n-Ping service useful.

Automagically ping blog search engines

I wanted to automatically ping Technorati, Icerocket, and Google Blog Search; that is, the blog search engines should be pinged with no intervention from me. I was all right with a delay of 15 minutes.

So I went about exploiting the XML-RPC services provided by the blog search engines. I came up with this Python script, and I set up a cron job to invoke the script every 15 minutes. See below for the source.

[sourcecode language='py']#!/usr/bin/python
import os
import urllib2
import xmlrpclib
from hashlib import md5

feed_url = '[Your feed url]'
blog_url = '[Your blog url]'
blog_name = '[Your blog name]'
hash_file_path = os.path.expanduser("~/.blogger/")


def main():
    # Fetch the current content of the feed.
    feed = urllib2.urlopen(urllib2.Request(feed_url)).read()

    # The last known digest lives in a file named after the hash of the blog URL.
    if not os.path.isdir(hash_file_path):
        os.makedirs(hash_file_path)
    hash_file_name = hash_file_path + md5(blog_url).hexdigest()
    last_digest = ''
    if os.path.exists(hash_file_name):
        hash_file = open(hash_file_name, "r")
        last_digest = hash_file.read()
        hash_file.close()

    curr_digest = md5(feed).hexdigest()
    if curr_digest != last_digest:
        ping = Ping(blog_name, blog_url)
        responses = ping.ping_all(['icerocket', 'technorati', 'google'])
        # Mode "w" truncates the file, so only the latest digest is kept.
        hash_file = open(hash_file_name, "w")
        hash_file.write(curr_digest)
        hash_file.close()


class Ping:
    def __init__(self, blog_name, blog_url):
        self.blog_name = blog_name
        self.blog_url = blog_url

    def ping_all(self, down_stream_services):
        responses = []
        for down_stream_service in down_stream_services:
            # Look up the method named after the service, e.g. _google.
            method = getattr(self, '_' + down_stream_service)
            responses.append(method())
        return responses

    def _icerocket(self):
        server = xmlrpclib.ServerProxy('http://rpc.icerocket.com:10080')
        response = server.ping(self.blog_name, self.blog_url)
        # print "Icerocket response : " + str(response)
        return response

    def _technorati(self):
        server = xmlrpclib.ServerProxy('http://rpc.technorati.com/rpc/ping')
        response = server.weblogUpdates.ping(self.blog_name, self.blog_url)
        # print "Technorati response : " + str(response)
        return response

    def _google(self):
        server = xmlrpclib.ServerProxy('http://blogsearch.google.com/ping/RPC2')
        response = server.weblogUpdates.ping(self.blog_name, self.blog_url)
        # print "Google blog search response : " + str(response)
        return response


if __name__ == '__main__':
    main()[/sourcecode]

Whenever the script is invoked, it fetches the content of the post feed, creates an MD5 hash of it, and compares that hash against the last known hash. If they differ, it pings the given list of services.
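The hash comparison can also be pulled out into a small function that is easy to try without touching the network. The following is a minimal sketch written for Python 3, not part of the original script; the name `feed_changed` and the `digest_path` parameter are made up for this example.

```python
import hashlib
import os
import tempfile


def feed_changed(feed_bytes, digest_path):
    """Return True (and record the new digest) if the feed differs from last time."""
    curr_digest = hashlib.md5(feed_bytes).hexdigest()
    last_digest = ""
    if os.path.exists(digest_path):
        with open(digest_path) as digest_file:
            last_digest = digest_file.read().strip()
    if curr_digest == last_digest:
        return False
    # Mode "w" truncates, so the file only ever holds the latest digest.
    with open(digest_path, "w") as digest_file:
        digest_file.write(curr_digest)
    return True


if __name__ == "__main__":
    # Throwaway location for the digest file, used only for this demonstration.
    path = os.path.join(tempfile.mkdtemp(), "digest")
    print(feed_changed(b"<feed>one</feed>", path))
    print(feed_changed(b"<feed>one</feed>", path))
    print(feed_changed(b"<feed>two</feed>", path))
```

The first call reports a change because no digest has been stored yet, the second reports none, and the third reports a change again because the content differs.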

This is very convenient if you have someplace to run the cron job. Even your own machine is sufficient if you can keep your machine on for at least 15 minutes after the blog post is made.

To run the script you need Python 2.4 or later and the Python package hashlib (part of the standard library from Python 2.5 onward). Hope you will find this useful.
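On Python 3 the modules used by the script have moved: `xmlrpclib` became `xmlrpc.client`, and `urllib2` was split into `urllib.request` and `urllib.error`. As a rough sketch of what a ping looks like on the wire, the snippet below builds the XML-RPC request body for `weblogUpdates.ping` without sending anything. The function name `build_ping_request` and the example blog name and URL are placeholders, not part of the original script.

```python
import xmlrpc.client


def build_ping_request(blog_name, blog_url):
    """Build the XML body of a weblogUpdates.ping call without sending it."""
    return xmlrpc.client.dumps((blog_name, blog_url),
                               methodname="weblogUpdates.ping")


if __name__ == "__main__":
    # Placeholder blog details, for illustration only.
    body = build_ping_request("Example Blog", "http://blog.example.com/")
    print(body)
    # Decode the body again to see what a server would receive.
    params, method = xmlrpc.client.loads(body)
    print(method, params)
```

To actually send the ping, `xmlrpc.client.ServerProxy` takes the place of `xmlrpclib.ServerProxy` and is called in the same way as in the script.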

Beryl – Eye candy for Linux desktops

If you think Linux is boring and lacks the eye candy you find in Windows (esp. Vista), you haven’t seen a Linux desktop running Beryl.

Beryl has added all the eye candy that Linux desktops lacked. It now definitely looks better than Windows XP and, in my opinion, better than Windows Vista as well.

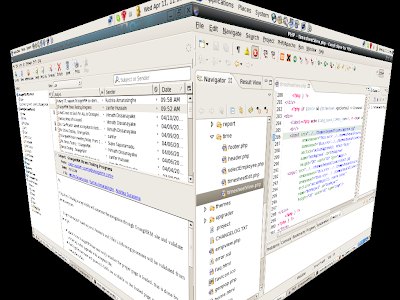

Here are some screenshots to prove it. 😉 (They are not faked.)

Running: Fedora Core 6, GNOME

Desktop cube:

Rain effect (Purely eye candy):